Resource Guide

AI Maturity Guide for Development Teams

AI has made significant progress over the last six months, and it's changing how software is made at every stage. In this guide, we walk through Squig's AI Development Maturity Model for Product Development and Organizations.

AI skeptics: AI isn't good enough right now

There's a lot of hype about what AI can do, and frontier companies have strong incentives to sell you AI as the next best thing. Many people have tried AI for work, felt that it wasn't ready, and disregarded it. That would have been fine for any other technology, but the state of AI changes significantly every three months, so it's important to update your priors. If the last time you tried AI was in mid-2025, the landscape has changed significantly.

Software Projects by AI

Bun is an all-in-one JavaScript and TypeScript toolkit designed to be a significantly faster, more modern replacement for Node.js.

JavaScript Runtime: Massive ~960k LOC Rewrite to Rust with Claude. Jarred Sumner (Bun creator, now at Anthropic) experimented with rewriting Bun from Zig to Rust. It was done in just ~6 days.

A team used 16 parallel Claude instances to build a full C compiler (~100k lines of Rust) from scratch. It compiles the Linux kernel with no active human supervision. Agents coordinated via file locking and git sync, like a remote dev team. Cost: ~$20k over 2 weeks (2B input tokens, 140M output tokens on Opus 4.6). This highlights swarm/agentic scaling for big projects.

Project announcement and details:

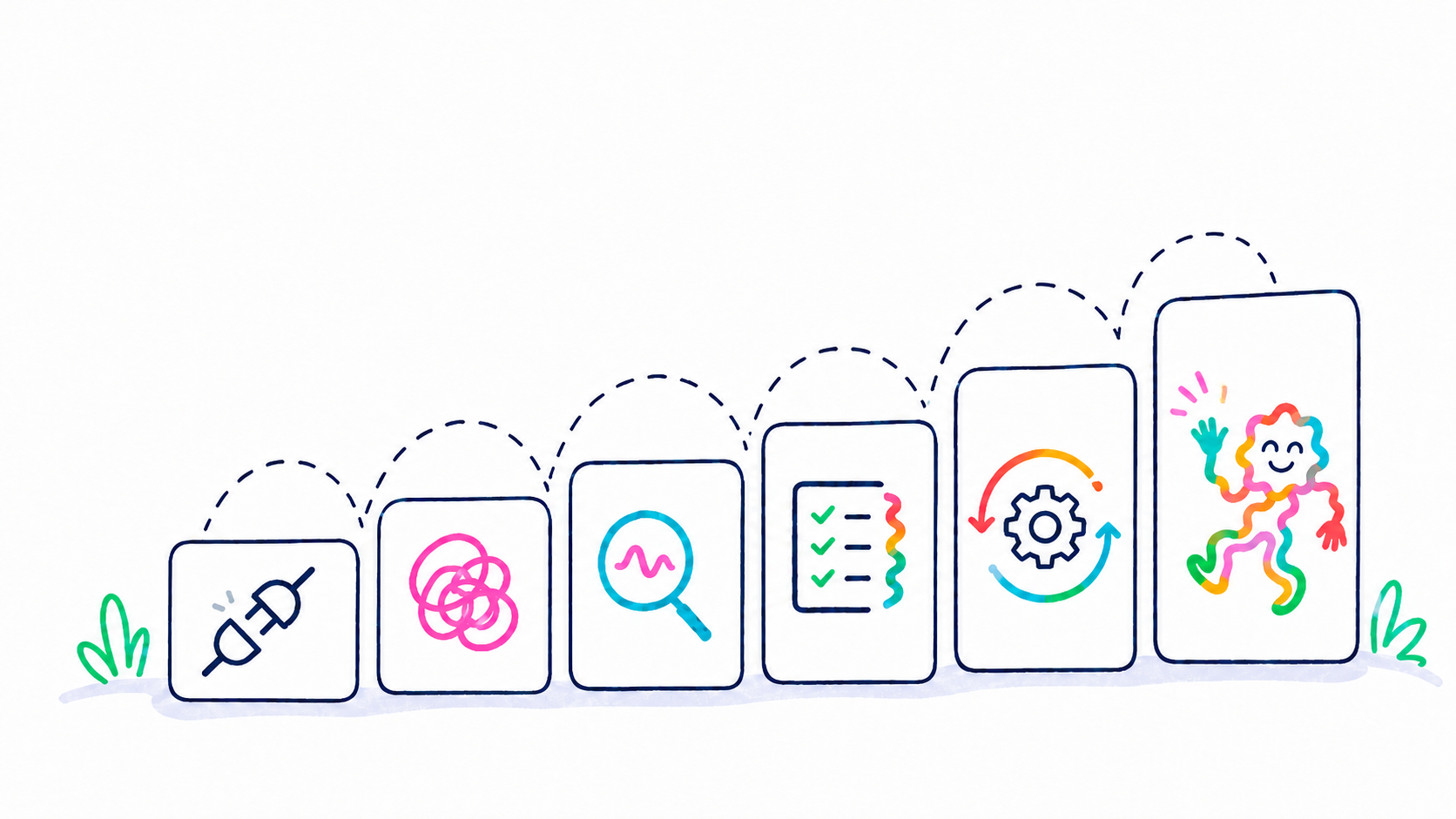

GPT-5.3-Codex (high-reasoning variant) ran uninterrupted for 25 hours to build a sophisticated design tool, Design Desk. It used durable project memory via .md files (goals, plans, architecture, implementation) and maintained long-horizon coherence across ~13M tokens.

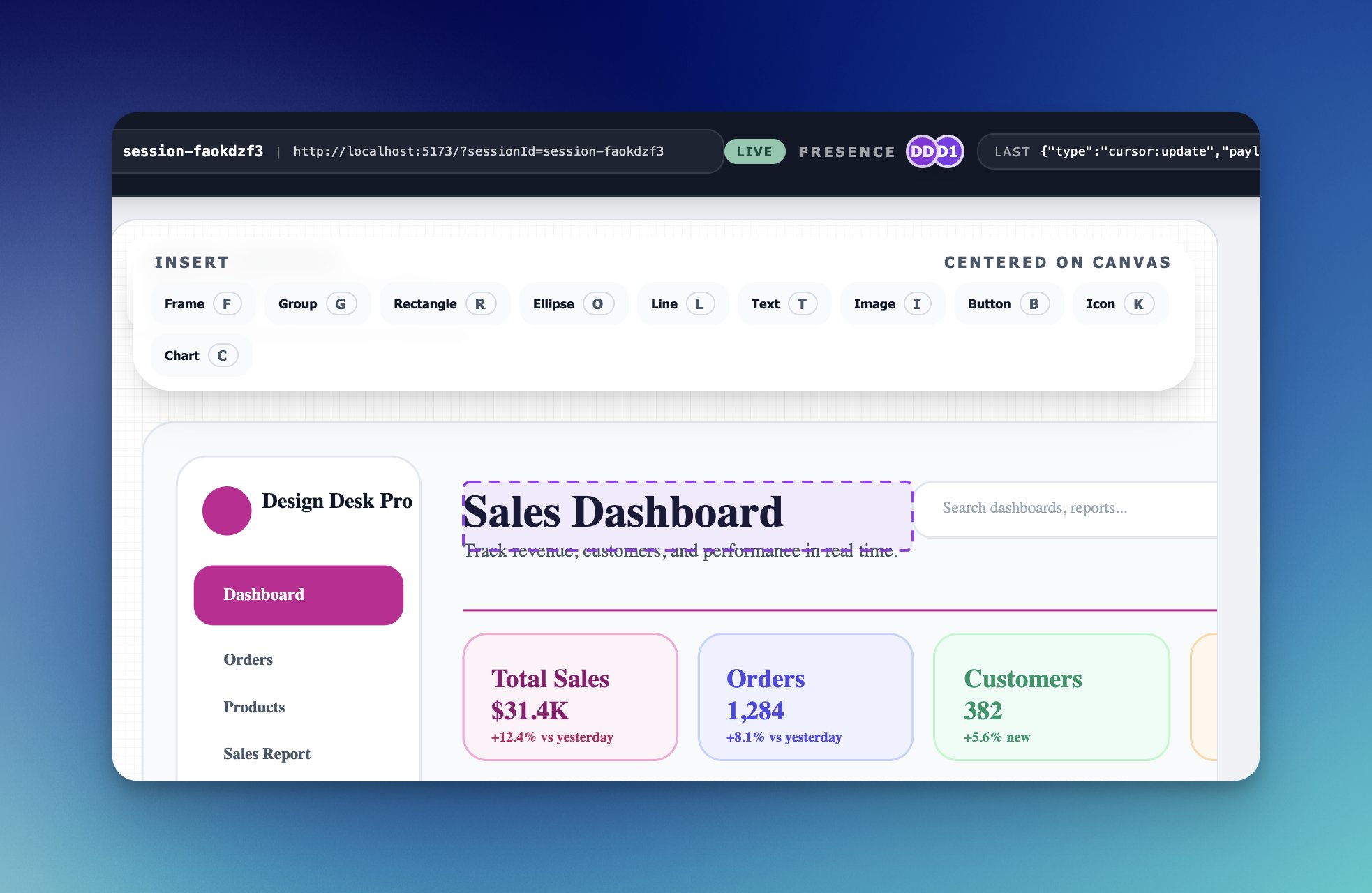

Verifpal is a cryptographic protocol verifier rewritten from Go to Rust with AI assistance.

OpenClaw exploded from a simple CLI tool into over half a million lines of code in its first 80 days alone, averaging over 6,400 new lines per day, largely fueled by AI generation. It has accepted 50k commits in 5.5 months, or about 300 per day.


AI will only accelerate existing behaviors.

One of the key benefits of AI is accelerating what you are doing. For a great engineering organization, AI will accelerate its output. If the organization is shitty, AI is just going to accelerate the diarrhea.

Before we talk about building an AI organization, let's briefly talk about what makes a good engineering organization. For this, we are going to use the years of research across thousands of organizations collected by Google under its DORA project. In summary, DORA identifies five key metrics that distinguish high-performing organizations:

State of AI-assisted Software Development 2025

DORA's research includes more than 100 hours of qualitative data and survey responses from nearly 5,000 technology professionals from around the world.

DORA has boiled its findings down to the following metrics for measuring high-performing organizations:

Throughput

Throughput measures how quickly changes move through your delivery system into production. DORA breaks throughput into three metrics:

1. Lead time for changes

The amount of time it takes for a change to go from committed to version control to deployed in production.

2. Deployment frequency

The number of deployments over a given period or the time between deployments.

3. Failed deployment recovery time

How quickly you can recover from a failed deployment or incident.

Stability

Stability measures how reliable your delivery process is and how often changes have to be fixed after release. DORA breaks stability into two metrics:

1. Change failure rate

The percentage of deployments causing a failure in production that requires remediation (e.g., a hotfix, rollback, or patch).

2. Rework rate

The percentage of deployments that were not planned but were performed to address a user-facing bug in the application.
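As a rough illustration, lead time, deployment frequency, change failure rate, and recovery time can all be derived from a simple log of deployments. The sketch below is a minimal Python example; the `Deployment` record and its field names are assumptions made for illustration, not a schema defined by DORA.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # needed a hotfix, rollback, or patch
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Compute four delivery metrics over one reporting period."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    recoveries = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "median_lead_time": median(lead_times),
        "deployments_per_day": len(deployments) / period_days,
        "change_failure_rate": len(failures) / len(deployments),
        "median_recovery_time": median(recoveries) if recoveries else None,
    }
```

Medians are used here instead of means so that a single slow deployment does not dominate the result; that is a design choice of this sketch, and your own reporting may prefer something else.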

Here is how organizations at different performance levels compare on these metrics.

| Performance level | Deployment frequency | Change lead time | Change failure rate | Failed deployment recovery time | % of respondents |
|---|---|---|---|---|---|
| Elite | On demand | Less than one day | 5% | Less than one hour | 18% |
| High | Between once per day and once per week | Between one day and one week | 10% | Less than one day | 31% |
| Medium | Between once per week and once per month | Between one week and one month | 15% | Between one day and one week | 33% |
| Low | Between once per week and once per month | Between one week and one month | 64% | Between one month and six months | 17% |

Rework rate distribution

Survey question: Approximately what percentage of deployments in the last six months were not planned but were performed to address a user-facing bug in the application?

| Rework rate | % at level | Top % |
|---|---|---|
| 0%-2% | 6.9% | 6.9% |
| 2%-4% | 5.8% | 12.8% |
| 4%-8% | 13.7% | 26.5% |
| 8%-16% | 26.1% | 52.6% |
| 16%-32% | 24.7% | 77.3% |
| 32%-64% | 15.4% | 92.7% |
| >64% | 7.3% | 100% |

Where do you stand on the DORA metrics? Take a 1-min self-assessment here

The AI Maturity Model for Product Teams

The problem is that everyone is using AI, but there is a spectrum. At the low end of the spectrum, a developer runs code, sees an error, pastes it into ChatGPT, reads the suggested fix, and then goes back to fix it manually. At the high end, the developer describes to an agent what has to be done and reviews the end result. The impact can range from 0.5x to 100x depending on where you land on the spectrum and how you design your organization.

Developers use AI (ChatGPT, Claude, etc.) instead of Google for quick answers and code snippets.

AI provides inline code suggestions and completions as developers type, similar to GitHub Copilot's original functionality.

Repo + architecture hygiene for AI

Ensure version control, clear module boundaries, consistent patterns, good naming, and a "pit of success" design, where the easiest path is also the correct one, to make your codebase AI-friendly.

Docs for AIs

A README per package with details on "how to run", "how to test", and "how to deploy", plus any ADRs (Architecture Decision Records).

Deterministic dev environments

devcontainers/Nix, one-command setup. Agents fall apart in flaky envs.

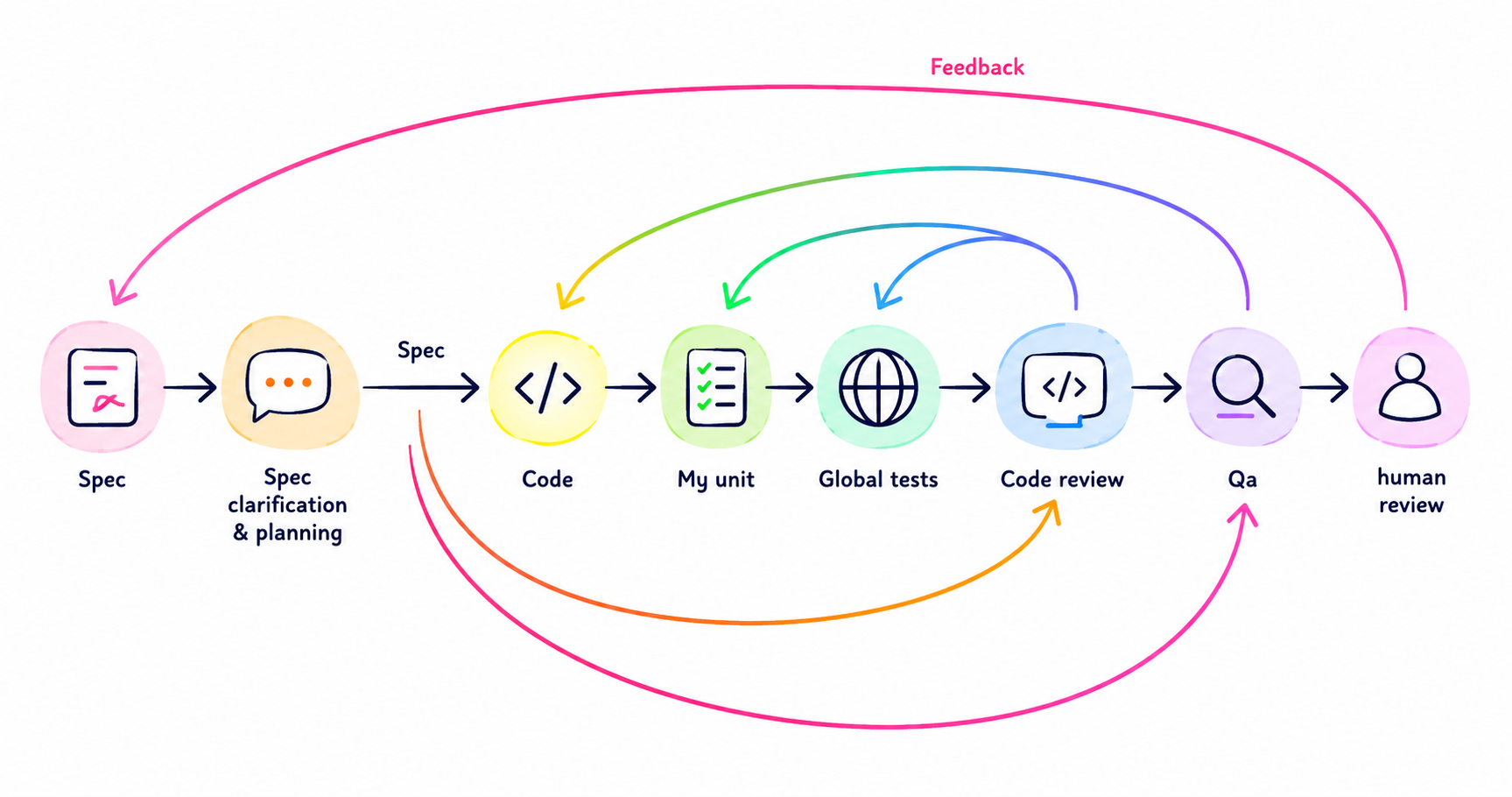

Document your requirements: Create structured PRDs, tech specs, task breakdowns, and acceptance criteria before starting implementation.

Define done: Include tests, telemetry, migration plans, and rollback procedures—not just "code compiles".

Track traceability: Link commits back to spec sections and requirements using task IDs (e.g., PROJ-123) from Jira or Linear.
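Traceability of this kind is easy to check mechanically. The following is a minimal Python sketch that extracts task IDs from commit messages and flags commits that reference none. The ID pattern (an uppercase project key, a hyphen, and a number, as in PROJ-123) is an assumption; adjust it to whatever format your tracker actually uses.

```python
import re

# Assumed ID format: uppercase project key, hyphen, number (e.g. PROJ-123).
# Note that this pattern would also match strings such as "UTF-8".
TASK_ID = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def task_ids(commit_message: str) -> list[str]:
    """Return task IDs referenced in a commit message, in order, without duplicates."""
    seen: dict[str, None] = {}
    for match in TASK_ID.findall(commit_message):
        seen.setdefault(match)
    return list(seen)


def untraceable(commit_messages: list[str]) -> list[str]:
    """Return the commit messages that reference no task ID."""
    return [msg for msg in commit_messages if not task_ids(msg)]
```

A check like `untraceable` could run in CI or a commit hook so that agent-written commits without a task ID are rejected early.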

Unit/integration tests are not enough for AI-generated behavior changes. Add golden/snapshot tests, property-based tests, and (for LLM features) evals.
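To make the distinction concrete, here is a small Python sketch of a golden test and a property-based test for the same function. The `slugify` function is a hypothetical stand-in for code under test, and the property test is hand-rolled with the standard `random` module rather than a dedicated library such as Hypothesis, purely to keep the example self-contained.

```python
import random


def slugify(title: str) -> str:
    """Hypothetical function under test: lowercase, hyphen-separated slug."""
    words = "".join(c if c.isalnum() else " " for c in title).split()
    return "-".join(w.lower() for w in words)


# Golden test: known inputs pinned to previously approved outputs.
GOLDEN = {
    "Hello, World!": "hello-world",
    "  AI  Maturity Guide ": "ai-maturity-guide",
}


def test_golden() -> None:
    for title, expected in GOLDEN.items():
        assert slugify(title) == expected, title


def test_properties(trials: int = 500, seed: int = 0) -> None:
    """Property test: invariants that must hold for any generated input."""
    rng = random.Random(seed)
    alphabet = "abcXYZ019 -_!?"
    for _ in range(trials):
        title = "".join(rng.choice(alphabet) for _ in range(rng.randint(0, 30)))
        slug = slugify(title)
        assert slugify(slug) == slug      # idempotent
        assert " " not in slug            # no spaces survive
        assert slug == slug.lower()       # always lowercase
        assert not slug.startswith("-") and not slug.endswith("-")
```

The golden test catches an agent silently changing approved behavior, while the property test catches inputs nobody thought to write down.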

Project memory (architecture, constraints, conventions) as maintained artifacts. Decision capture: agents write ADR drafts when they introduce new patterns. Reusable playbooks: "how we add a new endpoint", "how we add a new integration", etc.

Bring in broader context: access to product help documentation, issue tracker, internal knowledgebase.

Subagent spawning, third-party review tools, and security reviews.

AI-powered QA including UI testing and user emulation end-to-end.

Automated deployment pipeline with AI-assisted rollout and monitoring.

Parallelism with git worktrees
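One way to set this up is to give every agent task its own worktree and branch, so parallel agents never share a working directory. The Python sketch below wraps the `git worktree add -b <branch> <path>` command; the `agent/<task-id>` branch naming and the directory layout are assumptions made for illustration.

```python
import subprocess
from pathlib import Path


def worktree_command(task_id: str, base_dir: Path) -> list[str]:
    """Build the `git worktree add` command for one agent task."""
    branch = f"agent/{task_id}"   # hypothetical branch naming scheme
    path = base_dir / task_id     # one checkout directory per task
    return ["git", "worktree", "add", "-b", branch, str(path)]


def create_worktrees(task_ids: list[str], base_dir: Path, repo: Path) -> None:
    """Create one worktree per task, branching from the repo's current HEAD."""
    for task_id in task_ids:
        subprocess.run(worktree_command(task_id, base_dir), cwd=repo, check=True)
```

Each agent is then started inside its own directory, and finished work comes back through a normal pull request from the task branch.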

1. Error logs, security alerts, and metric changes all trigger an agent that researches the issue and generates a pull request. Low-risk code changes are automerged.

2. Agents pick up items from a prioritized backlog, make the changes, and return a playable URL to the product manager or designer. Engineering works on the harness, making sure agents can work autonomously by providing the right context, documentation, and details.
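What counts as "low-risk" has to be written down before anything can be automerged. The following Python sketch shows one possible shape for such a policy. Every threshold and path rule in it is a hypothetical example, not a recommendation from this guide, and the `PullRequest` record is likewise invented for illustration.

```python
from dataclasses import dataclass

# All values below are hypothetical examples; tune them to your codebase.
SENSITIVE_PARTS = ("migrations/", "auth/", "billing/")
MAX_LINES = 50


@dataclass
class PullRequest:
    changed_files: list[str]
    lines_changed: int
    checks_passed: bool


def can_automerge(pr: PullRequest) -> bool:
    """Return True only for small, green changes outside sensitive paths."""
    if not pr.changed_files or not pr.checks_passed:
        return False
    if pr.lines_changed > MAX_LINES:
        return False
    return not any(part in f for f in pr.changed_files for part in SENSITIVE_PARTS)
```

Anything the policy rejects simply falls back to human review, so a conservative starting point costs little.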

Practical recommendations:

1. Start small. One stage at a time.

Don't try to implement all stages at once; take them one at a time.

2. Add tests. Build coverage for confidence.

You want to build confidence in changes before you let an agent make them. Coverage is the prerequisite.

3. Reduce technical debt.

A lot of debt doesn't get prioritized and slows down both engineering and AI. You have to work around debt with lots of exception clauses and .md files explaining why something isn't quite the way it should be.

4. Consolidate your repos.

Your code should ideally be in a single repo; otherwise the agent needs access to all repos when a feature touches more than one.

5. Remove dead code.

Exclude previous versions from AI context. You want to limit the amount of searching and context building an AI has to do.

6. Upgrade frameworks and languages to latest versions.

e.g. from Python 2 to Python 3.14, from Django 2.0 to Django 6, etc.

7. Keep your README.md or AGENTS.md lean.

Document non-obvious things not discoverable in your repo. Reference "golden" items: a great function, module, API, etc.

8. Capture gotchas and tech debt in your docs.

Add them to your README or AGENTS.md, but work to eliminate them over time.

9. Use a secrets manager.

Protect against exfiltration attacks. Ideally your AI should not be able to access secrets directly.

10. Set up browser access for AI.

AI can now connect to Chrome's dev tools through CDP and read browser state. With computer use and browser plugins, the AI can test features in the browser itself. Make sure you set these up.

11. Moving up the ladder requires cross-functional buy-in.

Development alone can't improve the process. You'll need to work with product management, QA, product marketing, and DevOps. This requires buy-in from leadership that can effect change across the chain.

12. As coding time collapses, bottlenecks shift.

Bottlenecks move to reviews, specs, and architecture. Eventually, with enough codifying of your criteria, these can be automated with infrequent human review.

13. Minimize cross-team communication overhead.

Teams move fast when they can make independent decisions. A full-stack developer works faster with AI than a two-person front-end/back-end split.

14. Be biased towards planning and spec'ing.

Reviewing outputs after the fact ('I'll see what I get') is slower and more expensive.

15. Tokens add up: know your pricing.

The latest frontier models are expensive. As a general rule, models used via Anthropic's or OpenAI's own subscriptions give you 3-5x more tokens than via their APIs. If you use an IDE with an API key, it will not be as cost-effective.

16. Benchmark open models.

Models such as Kimi 2.6 are benchmarking incredibly well against Opus 4.6 and are great for many coding tasks. You should benchmark various models and compare the results.
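For the token pricing recommendation above, a back-of-the-envelope estimate is usually enough to compare options. The Python sketch below computes API cost from token counts and per-million-token prices. The prices are parameters you supply; no real vendor pricing is assumed here.

```python
def run_cost(input_tokens: int, output_tokens: int,
             usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Estimate API cost in USD from token counts and per-million-token prices."""
    input_cost = (input_tokens / 1_000_000) * usd_per_m_input
    output_cost = (output_tokens / 1_000_000) * usd_per_m_output
    return input_cost + output_cost
```

For example, `run_cost(10_000_000, 1_000_000, 3.0, 15.0)` uses made-up prices of $3 and $15 per million tokens and returns `45.0`. Real bills also depend on factors this sketch ignores, such as prompt caching and subscription allowances.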

Measuring AI Impact

1. If your company is using AI, leadership should have a sense that things are moving fast. There should be a material impact on the business's top and bottom lines. Without that, "using AI" is only an intermediary metric that ignores the actual end result.

2. Within engineering itself, you measure productivity in the traditional ways. Most engineering teams work in sprints and have a sprint velocity. With everything else being equal (sprint duration and team members), there should be an increase in the story points delivered. 2-3x is typical, but fully AI-pilled companies can run at 10-100x speed if they collapse product, design, and testing into small teams or even a single person.

3. If you're not using story points, you can use other proxy metrics. You should see an increase in the number of commits, PRs, lines of code, or tickets closed. Almost all ticket management software has an AI chat that you can ask for a sprint-by-sprint view of tickets, points, or PRs closed.
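The velocity comparison described above comes down to a simple ratio. Here is a minimal Python sketch, assuming you have story points per sprint for the same team and sprint length before and after adopting AI; the function name and inputs are illustrative.

```python
from statistics import mean


def velocity_change(before: list[float], after: list[float]) -> float:
    """Ratio of mean story points per sprint after vs. before AI adoption."""
    if not before or not after:
        raise ValueError("need at least one sprint on each side")
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("baseline velocity is zero")
    return mean(after) / baseline
```

The same function works for the proxy metrics (commits, PRs, or tickets closed per sprint), as long as both lists count the same thing.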

A developer who pastes errors into a chat window and a developer who delegates work to an agent are both using AI, but the agent user is several times more productive. This is not easy to set up and requires infrastructure investment and leadership commitment.

Organizational AI Maturity Ladder

1. User-driven chat: research jon@example.com, look for x, now look for y, now look for z

2. User uses projects and reusable skills: research jon@example.com

3. Reactive: a new lead arrives in the CRM and runs through enrichment

4. Proactive: agents plan, search for and find leads, and run them through the qualification process